
Multi-Factor Authentication (MFA) is currently discussed everywhere, at least within the IT industry, and it is often treated as the new panacea of security. This series of articles explains what it is, how it works, and how it can help to make systems more secure. As you might already suspect, it is not a universal solution to all security issues. ;)

Before we can explore the world of MFA, we should discuss authentication in general. Several keywords in the field of security are (unfortunately) often misused as buzzwords to make things sound more secure or more robust against attacks, for example: encryption, authentication, identification, certificates, public key infrastructure, and many more. Of course, each of them can be roughly described in a single sentence, but for sound knowledge you have to look under the hood. Misconceptions may render a system insecure. So let’s investigate authentication in more detail.

Authentication is the task of proving that something is authentic, meaning that this “something” is the original entity: not a copy, not a modified version, and not something that merely looks like the original. Let’s look at some practical examples: signing a letter, showing a passport to an officer, and logging in to a website with a username and a password.

These are common examples that everybody is familiar with. Unfortunately, even these simple examples rest on some assumptions that are probably not obvious at first glance. On the one hand the examples differ, but from an abstract point of view they are similar or even identical. All three usually authenticate a person to some other entity. The way the proof is carried out is the authentication mechanism: in the 1st case this is signing a letter with a signature, in the 2nd case it is showing the passport to an officer, and in the 3rd case it is providing a username and a password, usually known as the credentials.

All 3 examples use a specific unique property for the authentication proof: the signature, the passport, and the password. This property is called the authentication factor. In most cases the authentication factor is physically detached from the object it belongs to (i.e. the person, the owner), so it has to be ensured that there is a unique association between the authentication factor and its owner. Physical signatures are assumed to be unique. This is in fact almost true, but a secure proof would involve complex technical measurements of the writing speed, the pressure of the pen on the paper, a detailed analysis of each written character, and so on. As soon as the signature is scanned into a digital document, all these unique properties are lost and the proof no longer works.

Passports use photographs to associate the document with a person, and modern passports even carry fingerprints or other biometric features. Keep in mind: we want to authenticate the person, not the document.

The login credentials are assumed to be unique and authentic because the password is stored in someone’s head and cannot easily be “detached” from it.

However, nothing is perfect. All these authentication factors could be forged or get lost and found by some other person. History has shown that this happens. All authentication factors have weaknesses.

“We cannot exchange secrets without a (pre-)shared piece of common knowledge.”

From the security point of view there is another important issue with all authentication factors: there has to be a shared piece of common knowledge between the two parties. If you write a letter to an unknown person, he will not be able to check your authentication proof. Even if the signature is valid, how could he check?

Why does the customs officer accept your passport even if he doesn’t know you personally? Can’t you just print out your own self-made passport at home? No, of course not, because there is pre-shared knowledge: the customs officer knows what passports from specific countries look like (and he knows about all the security features within the passport).

Facebook checks your password in its database because you registered sometime before. Thus, you share a common secret with Facebook.

In the IT world this initial contact is often known as enrollment. It is an extremely sensitive part of the whole security system. Engineers, administrators, developers, and other people involved in the design and/or implementation of such enrollment mechanisms should be very careful with this part.

To wrap this up: authentication is the proof of one’s authenticity. The proof is carried out with a specific authentication mechanism using an authentication factor. If there is just one such factor, it is called single-factor authentication. A rule of good IT security is “Be as paranoid as possible!” So we should assume that this single authentication factor may get compromised, in which case the attacker can authenticate as someone else.

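If we use not just one but two (or more) different authentication factors for the same authentication task, it is called two-factor authentication (2FA) or, as the title of this article says, multi-factor authentication. That does not automatically mean that an MFA system cannot be bypassed, but the probability that both factors get compromised at the same time is much lower. For example, under the simplifying assumption that the two factors are compromised independently of each other, each with a probability of 1 in 100, both would be compromised together with a probability of only 1 in 10,000.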

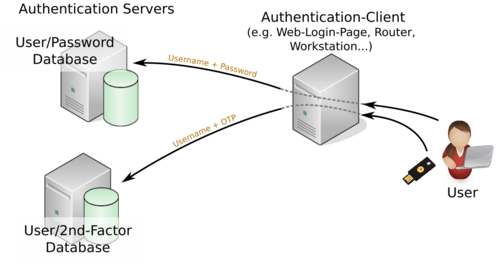

Fig. 1 shows the basic concept of how MFA works. On the right side is the user trying to prove his authenticity. He sends his credentials to the service he is trying to log in to. This service is shown in the center of the diagram, denoted as Authentication Client. The credentials usually consist of a username and a password, the latter being the 1st factor. Because MFA is used, the user also supplies a 2nd secret factor. This could be just another password, but most often it is an algorithmically derived one-time password (OTP). We will elaborate on that shortly.

The authentication client (the web page) now verifies both factors by sending the credentials to the authentication servers on the left side of the diagram. As shown, these are separate steps and can be carried out independently of each other. The login process will be successful only if both authentication servers respond with a successful authentication (meaning the credentials are correct).

Since the authentication processes for each factor are independent it should become clear that it basically is not a big deal to implement e.g. three-factor authentication.
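The decision logic described above can be sketched in a few lines of Python. This is only an illustration under assumptions: each `Verifier` callable stands in for one of the authentication servers in Fig. 1, and the names `login`, `check_password`, and `check_otp` as well as the demo credentials are hypothetical, not part of any real product.

```python
from typing import Callable, Sequence

# A verifier stands in for one authentication server: it receives the
# username and the proof for its factor and answers yes or no.
Verifier = Callable[[str, str], bool]


def login(username: str, proofs: Sequence[str], verifiers: Sequence[Verifier]) -> bool:
    """Succeed only if every factor is verified by its own server."""
    if not verifiers or len(proofs) != len(verifiers):
        return False
    # Ask every server instead of stopping at the first failure; the single
    # yes/no result does not reveal which factor was wrong.
    results = [verify(username, proof) for verify, proof in zip(verifiers, proofs)]
    return all(results)


# Hypothetical stand-ins for the two authentication servers (demo data only).
def check_password(user: str, password: str) -> bool:
    return (user, password) == ("alice", "correct horse")


def check_otp(user: str, otp: str) -> bool:
    return (user, otp) == ("alice", "755224")


print(login("alice", ["correct horse", "755224"], [check_password, check_otp]))  # True
print(login("alice", ["correct horse", "000000"], [check_password, check_otp]))  # False
```

In this sketch a third factor is simply a third entry in both lists, which mirrors the point that the factors are verified independently of each other.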

Practically, some questions should arise from this diagram:

In the following we’ll try to briefly introduce these issues.

The 2nd authentication factor is just another secret, just as the 1st one is typically a secret password. There are several options for choosing this 2nd factor. It could be a biometric feature, but although biometrics are heavily used, at least in sci-fi movies, they introduce several security-related issues.

In practice, hardware devices are used that generate either a static code or a one-time password (OTP). The latter is the generally accepted solution for good security, and it can be implemented in several ways.

The basic idea is as follows: a device generates an “endless” stream of numbers, one by one, based on some algorithm. The actual sequence, that is, which numbers appear, depends on a unique secret that was initially set either in hardware or in software. Only somebody (or some computer) who knows the same secret is able to generate the same sequence of numbers. Thus, if two independent entities generate the same sequence, they share a common secret.
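To make the idea concrete, here is a minimal sketch of one standardized way to produce such a sequence, the counter-based HOTP algorithm from RFC 4226. The article itself does not commit to a particular algorithm, so the choice of HOTP, the function name `hotp`, and the demo secret (the test secret published in the RFC) are assumptions made for illustration.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Derive the OTP for one position in the sequence (RFC 4226)."""
    # The counter is hashed as an 8-byte big-endian integer.
    message = struct.pack(">Q", counter)
    digest = hmac.new(secret, message, hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select an offset.
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


# Demo secret taken from the RFC 4226 test vectors; never use it for real.
shared_secret = b"12345678901234567890"

# Both sides hold the same secret, so both compute the same sequence.
print([hotp(shared_secret, n) for n in range(3)])
# ['755224', '287082', '359152']
```

Anyone holding a different secret gets a completely different sequence, which is exactly the property the paragraph above relies on.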

The OTPs are sometimes called tokens, and the device which generates them is called the token generator, the token card, or sometimes also just the token. These terms got a little mixed up once the technique became more widespread and ordinary people, not just crypto experts, started to deal with it. The token generator does not have to be a separate hardware device. In fact, it is even more commonly implemented as an OTP app on Android or iOS.

And this is how OTP authentication works: the token (generator), or the app, has to be initialized before it can be used. This initialization is actually the key exchange, meaning the generation and exchange of the secret between the token and the authentication server. It does not work without this initial step, simply because you cannot hide data with an unknown secret. [1]
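A minimal sketch of this initialization step might look as follows. It assumes that the server creates the secret and hands it to the token or app as Base32 text, which is a common convention for OTP apps but not something this article prescribes; the function name `enroll` and the 20-byte length are hypothetical choices.

```python
import base64
import secrets
from typing import Tuple


def enroll(num_bytes: int = 20) -> Tuple[bytes, str]:
    """Create a fresh shared secret and a text form for transferring it."""
    # secrets draws from the operating system's cryptographic random source.
    secret = secrets.token_bytes(num_bytes)
    text_form = base64.b32encode(secret).decode("ascii")
    return secret, text_form


server_copy, transfer_text = enroll()
# The token or app decodes the transferred text and holds the same bytes.
token_copy = base64.b32decode(transfer_text)
print(token_copy == server_copy)  # True
```

The transfer itself is the sensitive part: whoever sees `transfer_text` during enrollment can generate the same OTPs as the legitimate token.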

At the authentication stage (e.g. the login process) the user provides the password which is checked as usual and additionally he provides this 2nd factor which is a number (OTP) derived from the token generator. At the same time the token authentication server generates a number as well based on the secret which was initially set up. If this number matches the one provided by the user, the user is successfully authenticated.
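The server-side comparison can be sketched like this. The generator is passed in as a callable so that the sketch does not depend on a particular OTP algorithm; `verify_otp`, `expected_at`, and `demo_generator` are hypothetical names. The small look-ahead window is also an assumption: it is a common way to tolerate a counter-based token that is a few steps ahead of the server.

```python
import hmac
from typing import Callable, Optional


def verify_otp(submitted: str, expected_at: Callable[[int], str],
               counter: int, look_ahead: int = 2) -> Optional[int]:
    """Return the next counter value on success, or None on failure."""
    for candidate in range(counter, counter + look_ahead + 1):
        expected = expected_at(candidate)
        # compare_digest avoids leaking how many leading characters matched.
        if hmac.compare_digest(submitted.encode(), expected.encode()):
            return candidate + 1  # all older OTPs are now invalid
    return None


# Stand-in generator for the demo only; a real server would derive the
# value from the shared secret set up during initialization.
def demo_generator(counter: int) -> str:
    return f"{(counter * 7919) % 1_000_000:06d}"


next_counter = verify_otp(demo_generator(5), demo_generator, counter=5)
print(next_counter)  # 6
# Replaying the same OTP fails because the counter has moved on.
print(verify_otp(demo_generator(5), demo_generator, counter=next_counter))  # None
```

Storing the returned counter after every successful login is what makes each number usable only once.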

Some tokens seem to work without initialization, but that’s not really true. In these cases the secret is either printed on the device, for example in the form of an ID number, or it was pre-shared with a cloud service when the device was produced.

Anyway, as already mentioned there are several implementations and algorithms around. A detailed analysis would by far exceed the scope of this article.

A general misconception is that if MFA is being used, nothing else can go wrong. Obviously, that is not true ;) There are some attack vectors in general:

That is quite a number of attack vectors, and of course it depends on what exactly the attacker’s goal is. All three communication lines could be attacked.

The token generator is a piece of hardware: either a specialized hardware device or a smartphone. Either may get stolen or lost, and a smartphone may additionally be compromised by malicious software.

As a matter of good security, the communication lines should of course be cryptographically secured. But that doesn’t make them immune to attacks such as Man-In-The-Middle (MITM). If an attacker is able to successfully launch a MITM attack against the user, he could capture both factors. At first glance one might say that this doesn’t matter because the 2nd factor is an OTP and cannot be reused. Although this is true, the attacker then also knows the 1st factor, which is just a regular password. And this in turn means that, if the password was not chosen carefully, the attacker may use it to log in at some other service. You may want to read our article series about Password (In)Security.

All other communication lines could be attacked as well. One might think that they are more secure because they are kept within an internal network. That may be true. But in some cases cloud services are used for the authentication of the 2nd factor, which means that this 2nd-factor authentication server resides somewhere on the Internet. As a consequence, the authentication client has to communicate across the Internet, a path that can be attacked in several ways.

Of course, the servers themselves may be attacked and compromised, in which case the attacker no longer needs to attack either the password or the 2nd factor. In this case the attacker will probably also be able to read the password database, and he may either use it himself or sell it. Either way, whether the passwords are really lost then depends on the software design and on the choice of the passwords, as described in our article series on password security.
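As one illustration of what “software design” can mean here, the following sketch stores only a salted, deliberately slow hash of each password, so that a stolen database does not directly reveal the passwords. The article series referred to above is not reproduced here, so the choice of PBKDF2, the iteration count, and the names `hash_password` and `verify_password` are assumptions and not necessarily what that series recommends.

```python
import hashlib
import hmac
import secrets
from typing import Tuple

# One stored record per user: (salt, iteration count, derived hash).
Record = Tuple[bytes, int, bytes]


def hash_password(password: str, iterations: int = 600_000) -> Record:
    """Derive a salted, slow hash; only this record goes into the database."""
    salt = secrets.token_bytes(16)  # unique per user, not secret
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return salt, iterations, digest


def verify_password(password: str, record: Record) -> bool:
    """Recompute the hash with the stored salt and compare in constant time."""
    salt, iterations, stored = record
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return hmac.compare_digest(candidate, stored)


record = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", record))  # True
print(verify_password("123456", record))                        # False
```

With such a design an attacker who reads the database still has to guess each password, which is cheap for weak passwords and expensive for well-chosen ones.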

This article introduced the concept of multi-factor authentication: what it is and how it works. MFA improves security because compromising a single factor is no longer sufficient to authenticate as someone else.

But obviously, it is not a universal solution to all security issues. There are several attack vectors, and once the system is compromised the consequences are the same as if single-factor authentication had been broken.

As a general rule for users: do not weaken your 1st factor (the password) just because MFA is activated! And for system architects, rethink your network and software design! It’s a tripping hazard to think everything is fine just because MFA is deployed.

Many public services today offer MFA as an additional authentication method.

I’d definitely recommend using it because it makes the login process more secure.

Bernhard R. Fischer started his career as a network engineer responsible for building up a national IP network and later for developing and maintaining the major Internet services for this network, such as DNS and email. After his studies of business economics and information systems at the University of Vienna he became a researcher and teaching fellow at the University of Applied Sciences of St. Pölten in the fields of computer networks, operating systems, and IT security. Since 2017 he has been employed as a senior security consultant at Antares-NetlogiX GmbH.

Throughout his career Bernhard has contributed to many public projects and discussions, spoken at conferences, and voluntarily supported numerous open source projects, not just as an advocate but also as a software engineer and developer. He has always placed emphasis on the robustness and quality of software and systems. He painstakingly investigates the details of everything and willingly passes his knowledge on to everybody who is interested.

Some of his own public projects can be found on GitHub, and some articles and opinions on his personal Tech & Society Blog and on Twitter. Bernhard is also into sailing; he runs his own professional web site and produces the technical podcast Schiff – Captain – Mannschaft, which is about sailing, seamanship, and sailboats.

[1] Yes, you can, but we won’t ever be able to recover it ;)